Advanced Technical SEO Strategies for Enterprise Websites: Optimize Crawl Budget, JavaScript Rendering, and Site Architecture

Enterprise websites often run to hundreds of thousands of URLs, and at that scale inefficient crawling and indexing translate directly into missed traffic and conversions. This guide covers three areas of technical SEO that matter most for large sites: managing crawl budget so search engines spend their time on the pages that count, designing a site architecture that crawlers and users can both navigate, and making sure content rendered with JavaScript is still accessible to search engines. It closes with the audit tools and automation strategies that keep these optimizations in place as the site grows.

How Can Crawl Budget Management Improve Enterprise Website SEO?

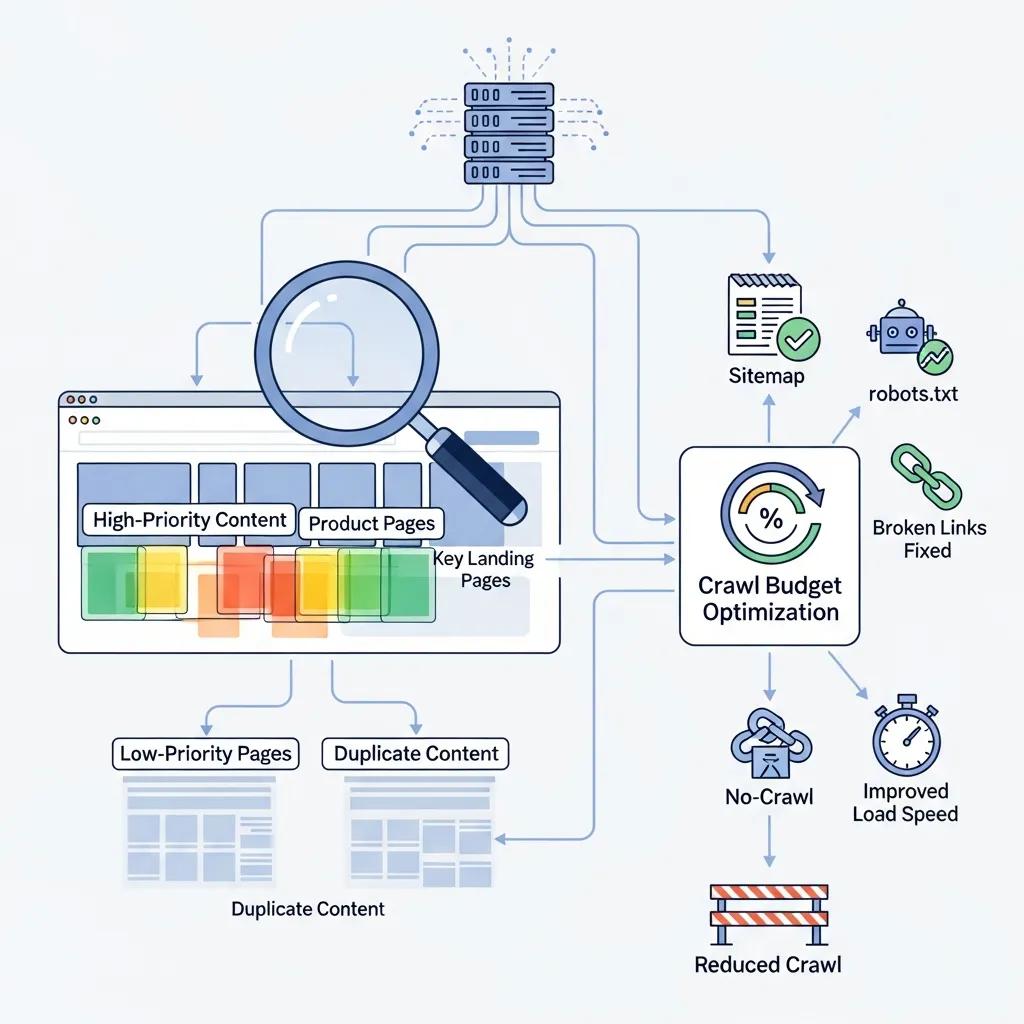

Crawl budget is the number of URLs a search engine is willing and able to crawl on a site in a given period. Managing it is a critical part of technical SEO because a page that is never crawled cannot be indexed. By steering crawlers toward their most important pages and away from low-value URLs, enterprises can get new and updated content discovered sooner, which supports better visibility in search results and more organic traffic. This matters most for large websites with numerous pages, where inefficient crawling can leave valuable pages unindexed.

What Techniques Optimize Crawl Budget for Large Enterprise Sites?

To optimize crawl budgets for large enterprise sites, several techniques can be employed:

- Prioritization of Important Pages: Identifying key pages and linking to them prominently from the internal link structure makes it more likely that search engines discover and recrawl the most valuable content first.

- Use of Robots.txt and Sitemaps: A well-structured robots.txt file keeps crawlers out of low-value areas such as internal search results, while XML sitemaps point them to the URLs that should be crawled and indexed.

- Minimizing Duplicate Content: Consolidating duplicate or near-duplicate URLs, for example with canonical tags or redirects, prevents search engines from wasting crawl budget on similar pages.

These techniques collectively enhance the efficiency of search engine crawling, ensuring that critical pages receive the attention they deserve.
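As a rough illustration of the robots.txt technique above, the following sketch uses Python's standard `urllib.robotparser` module to check which URLs a set of rules would allow. The rules, paths, and `example.com` domain are hypothetical. Note that Python's parser applies the first matching rule with simple prefix matching, which can differ from how a given search engine resolves conflicting rules, so treat it as a sanity check rather than a definitive simulation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt; the blocked paths and the domain are assumptions
# chosen for illustration, not recommendations for any particular site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /cart
Allow: /

Sitemap: https://www.example.com/sitemap.xml
"""


def crawl_permissions(robots_txt: str, urls: list[str],
                      agent: str = "Googlebot") -> dict[str, bool]:
    """Return {url: allowed} for the given user agent under these rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {url: parser.can_fetch(agent, url) for url in urls}


def declared_sitemaps(robots_txt: str) -> list[str]:
    """Return the sitemap URLs declared in the robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    # site_maps() returns None when no Sitemap line is present.
    return parser.site_maps() or []


if __name__ == "__main__":
    sample_urls = [
        "https://www.example.com/products/widget",
        "https://www.example.com/search?q=shoes",
    ]
    for url, allowed in crawl_permissions(ROBOTS_TXT, sample_urls).items():
        print("ALLOW" if allowed else "BLOCK", url)
    print(declared_sitemaps(ROBOTS_TXT))
```

Running a check like this against a list of priority URLs before deploying a robots.txt change can catch rules that accidentally block important pages.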

How Does AI Enhance Crawl Budget Efficiency?

Artificial intelligence (AI) can play a significant role in optimizing crawl budget efficiency. Site owners cannot set a search engine's crawl budget directly, but AI models can analyze user behavior and traffic patterns to identify the pages most likely to convert, so that those pages can be prioritized in internal linking and sitemaps. Repeating this analysis as traffic changes keeps the site's crawl signals aligned with its high-value content. Additionally, AI can help identify issues that hinder crawling, such as broken links or slow-loading pages, so they can be resolved before they waste crawl capacity.

Further research highlights how advanced algorithms, including those leveraging AI, can dynamically adjust crawling strategies to improve efficiency and relevance.

Advanced Crawl Budget Management with Adaptive Algorithms

Web crawling is an essential technique for collecting data in various domains, but traditional crawlers often struggle with inefficiencies such as irrelevant data collection, redundant crawling, and resource overuse. This paper explores the enhancement of web crawling efficiency through the integration of adaptive scheduling algorithms and ChatGPT. Adaptive scheduling dynamically prioritizes URLs based on real-time parameters such as relevance, server load, and crawling depth, enabling a more targeted and resource-efficient approach.

Enhancing Web Crawling Efficiency with Adaptive Scheduling Algorithms and ChatGPT Integration, NM Tuan, 2024

What Are the Best Practices for Enterprise Site Architecture?

Effective site architecture is fundamental to SEO scalability and user experience. A well-structured site not only facilitates easier navigation for users but also helps search engines understand the hierarchy and importance of content. Best practices for enterprise site architecture include creating a logical structure, ensuring mobile-friendliness, and optimizing for speed. These elements contribute to a seamless user experience and improved search engine rankings.

How Does Site Architecture Affect SEO Scalability?

Site architecture significantly impacts SEO scalability by influencing how easily search engines can crawl and index a website. A poorly structured site can lead to crawl inefficiencies, where search engines struggle to access important content. Conversely, a well-organized site architecture allows for better indexing and improved visibility in search results. For instance, a clear hierarchy with categorized content can enhance the likelihood of pages being crawled and indexed effectively.
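One way to make this measurable is click depth, the number of links a crawler must follow from the homepage to reach a page. The sketch below is a minimal example that assumes the internal link graph has already been collected, for instance by a crawl, into a dictionary mapping each page to the pages it links to. The function names and the sample graph are hypothetical.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str) -> dict[str, int]:
    """Breadth-first search from the homepage; returns {page: click depth}."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def orphan_pages(links: dict[str, list[str]], home: str) -> list[str]:
    """Pages known to the graph that cannot be reached from the homepage."""
    reachable = set(click_depths(links, home))
    known = set(links) | {t for targets in links.values() for t in targets}
    return sorted(known - reachable)


# Hypothetical internal link graph for illustration only.
SITE = {
    "/": ["/shoes", "/bags"],
    "/shoes": ["/shoes/running"],
    "/shoes/running": ["/shoes/running/model-x"],
    "/bags": [],
    "/old-landing": ["/"],  # links out, but nothing links to it
}

if __name__ == "__main__":
    for page, depth in sorted(click_depths(SITE, "/").items(), key=lambda x: x[1]):
        print(depth, page)
    print("orphans:", orphan_pages(SITE, "/"))
```

Pages that sit many clicks deep, or that are unreachable from the homepage, are natural candidates for additional internal links or a place in the category hierarchy.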

Which Flowcharts Illustrate Effective Enterprise Site Structures?

Flowcharts can be invaluable tools for visualizing effective enterprise site structures. They provide a clear representation of how content is organized and how users navigate through the site. Effective flowcharts typically include:

- Hierarchical Structures: Showcasing the relationship between main categories and subcategories.

- User Journey Mapping: Illustrating how users interact with the site and where they may encounter obstacles.

- Content Relationships: Highlighting connections between related content to enhance internal linking strategies.

These visual aids can help teams identify areas for improvement and ensure that the site architecture supports both user experience and SEO goals.

How to Address JavaScript Rendering SEO Challenges in Enterprise Websites?

JavaScript rendering poses unique challenges for SEO, particularly for enterprise websites that rely heavily on dynamic content. Search engines may struggle to index content that is generated through JavaScript, leading to potential visibility issues. Addressing these challenges is essential for ensuring that all content is accessible to search engines.

What Are Common JavaScript SEO Issues and Their Solutions?

Common JavaScript SEO issues include:

- Indexing Problems: Search engines may not index content that is rendered via JavaScript. Solutions include server-side rendering or pre-rendering to ensure content is accessible.

- Slow Loading Times: Heavy JavaScript can slow down page loading, negatively impacting user experience. Optimizing scripts and reducing unnecessary code can help.

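- Crawl Errors: JavaScript can lead to crawl errors if not implemented correctly, for example when navigation relies on click handlers instead of standard links with an href that crawlers can follow. Regular audits and testing can identify and resolve these issues.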

By addressing these common challenges, enterprises can improve their site’s crawlability and ensure that all content is indexed effectively.
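A simple audit for the indexing problem described above is to check whether key phrases appear in the raw HTML the server returns, before any JavaScript runs. The following sketch uses only Python's standard `html.parser` and works on HTML strings, so fetching the page is left out. The helper names and sample pages are hypothetical.

```python
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collects text nodes, ignoring the contents of script and style tags."""

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def visible_text(html: str) -> str:
    """Return the page text with whitespace collapsed."""
    extractor = _TextExtractor()
    extractor.feed(html)
    extractor.close()
    return " ".join(" ".join(extractor.chunks).split())


def missing_from_raw_html(html: str, phrases: list[str]) -> list[str]:
    """Phrases that do not appear in the server-delivered HTML text."""
    text = visible_text(html).lower()
    return [p for p in phrases if p.lower() not in text]


# Hypothetical responses: a client-rendered shell and a server-rendered page.
CSR_SHELL = ('<html><body><div id="root"></div>'
             '<script>render("Blue Running Shoes")</script></body></html>')
SSR_PAGE = '<html><body><h1>Blue Running Shoes</h1><p>In stock</p></body></html>'

if __name__ == "__main__":
    print(missing_from_raw_html(CSR_SHELL, ["Blue Running Shoes"]))
    print(missing_from_raw_html(SSR_PAGE, ["Blue Running Shoes"]))
```

A phrase reported as missing is not necessarily invisible to search engines, since some of them do render JavaScript, but it marks content whose indexing depends on that rendering step succeeding.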

How Does Dynamic Rendering Improve Crawlability?

Dynamic rendering is a technique that can significantly enhance crawlability for JavaScript-heavy websites. This approach involves serving a static HTML version of the page to search engines while delivering the full JavaScript experience to users. This ensures that search engines can access and index the content without encountering rendering issues. The benefits of dynamic rendering include:

- Improved Indexing: Makes content available to search engines for indexing, regardless of how it is rendered for users.

- Faster Crawling: Static HTML pages are typically crawled more quickly than dynamic JavaScript pages.

- Enhanced User Experience: Users still receive the full interactive experience while search engines can effectively index the content.

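Dynamic rendering provides a practical solution for enterprises looking to optimize their JavaScript-heavy websites for search engines. Google's own documentation, however, describes it as a workaround rather than a long-term solution and recommends server-side rendering, static rendering, or hydration where feasible, so it is best treated as an interim measure.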

Which Technical SEO Audit Tools Are Essential for Enterprise Scalability?

Conducting regular technical SEO audits is vital for maintaining the health and performance of enterprise websites. Several tools can assist in identifying issues and optimizing site performance. Essential tools for technical SEO audits include:

- Crawl Analysis Tools: These tools help identify crawl errors, broken links, and other issues that may hinder search engine indexing.

- Site Speed Testers: Tools that measure page loading times and provide recommendations for optimization.

- Mobile Usability Testers: Tools that check whether pages are mobile-friendly, which is crucial for both user experience and SEO.

These tools collectively support enterprises in maintaining a scalable and efficient online presence.

How Do Server Log Analysis Tools Support Enterprise SEO?

Server log analysis tools are essential for understanding how search engines interact with a website. By analyzing server logs, enterprises can gain insights into:

- Crawl Frequency: Understanding how often search engines visit the site and which pages are crawled.

- Crawl Errors: Identifying issues that may prevent search engines from accessing content.

- User Behavior: Gaining insights into how users navigate the site and where they may encounter obstacles.

This data is invaluable for optimizing crawl budgets and improving overall site performance.
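As a minimal sketch of this kind of analysis, the following example parses access log lines in the common "combined" format and counts crawler requests per path, along with any error responses served to the crawler. The log lines are made-up sample data, and the regular expression assumes the combined format, so it would need adjusting for other log layouts.

```python
import re
from collections import Counter

# Matches the "combined" access log format; other layouts need a different pattern.
LOG_LINE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_bot_hits(lines, bot_token: str = "Googlebot"):
    """Return (hits per path, errors per (path, status)) for one crawler."""
    hits: Counter = Counter()
    errors: Counter = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match or bot_token.lower() not in match["agent"].lower():
            continue  # skip unparseable lines and non-crawler traffic
        hits[match["path"]] += 1
        if int(match["status"]) >= 400:
            errors[(match["path"], match["status"])] += 1
    return hits, errors


# Made-up sample log lines for illustration only.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SAMPLE_LOG = [
    f'66.249.66.1 - - [10/Mar/2025:06:25:01 +0000] "GET /products/widget HTTP/1.1" 200 5123 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Mar/2025:06:25:04 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [10/Mar/2025:06:25:09 +0000] "GET /products/widget HTTP/1.1" 200 5123 "https://www.example.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    f'66.249.66.1 - - [10/Mar/2025:07:02:41 +0000] "GET /products/widget HTTP/1.1" 200 5123 "-" "{GOOGLEBOT_UA}"',
]

if __name__ == "__main__":
    hits, errors = summarize_bot_hits(SAMPLE_LOG)
    print(hits.most_common())
    print(dict(errors))
```

Because the user-agent header can be forged, counts like these are best read as an approximation unless crawler IP addresses are verified separately.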

What Automation Strategies Enhance Technical SEO Audits?

Automation can significantly enhance the efficiency of technical SEO audits. Strategies include:

- Automated Reporting: Setting up automated reports to track key SEO metrics and flag issues as soon as they appear.

- Scheduled Crawls: Regularly scheduled crawls can help identify new issues as they arise, ensuring that the site remains optimized.

- Integration with Analytics: Connecting SEO tools with analytics platforms can provide a comprehensive view of site performance and user behavior.

By implementing these automation strategies, enterprises can streamline their SEO efforts and maintain a proactive approach to site optimization.
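To illustrate how scheduled crawls can feed automated reporting, the sketch below compares two crawl snapshots and lists what changed between them. It assumes each crawl has been reduced to a dictionary of URL to HTTP status code; the function name, report keys, and sample data are hypothetical.

```python
def find_regressions(previous: dict[str, int],
                     current: dict[str, int]) -> dict[str, list[str]]:
    """Compare two crawl snapshots ({url: status}) and report changes."""
    report: dict[str, list[str]] = {
        "newly_broken": [],  # previously fine, now returns 4xx or 5xx
        "disappeared": [],   # present in the last crawl, absent in this one
        "new_pages": [],     # seen for the first time in this crawl
    }
    for url, status in current.items():
        if url not in previous:
            report["new_pages"].append(url)
        elif previous[url] < 400 <= status:
            report["newly_broken"].append(url)
    report["disappeared"] = [url for url in previous if url not in current]
    for urls in report.values():
        urls.sort()
    return report


if __name__ == "__main__":
    # Made-up snapshots for illustration only.
    last_week = {"/": 200, "/pricing": 200, "/blog/launch": 200}
    this_week = {"/": 200, "/pricing": 404, "/blog/update": 200}
    for category, urls in find_regressions(last_week, this_week).items():
        print(category, urls)
```

A report like this could be generated after every scheduled crawl and sent to the team only when one of the lists is non-empty.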